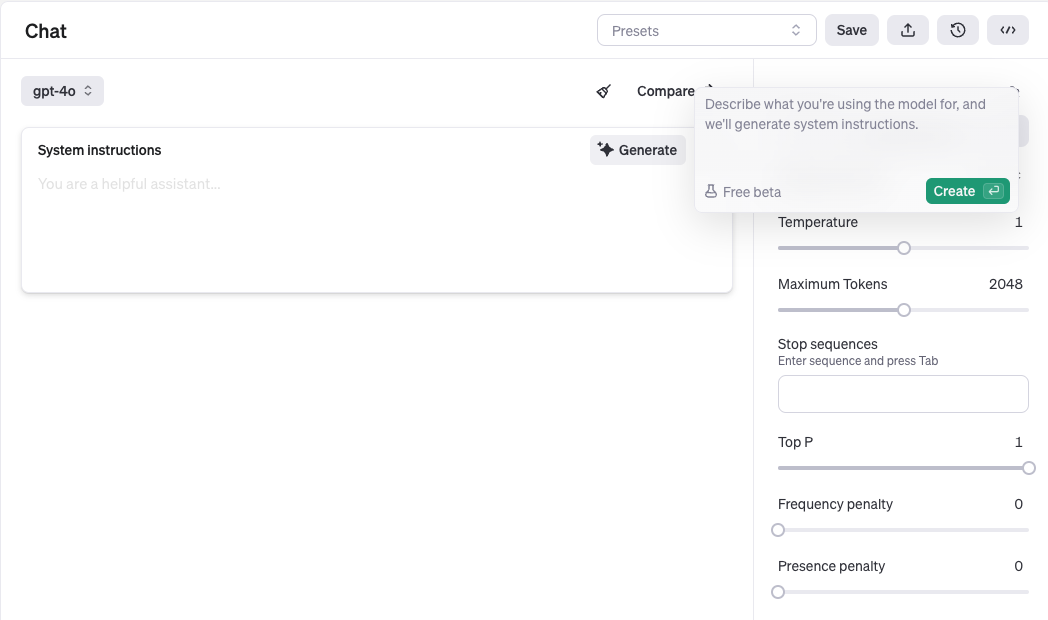

OpenAI added a "Generate" button to its Playground that creates system prompts, functions, and schemas from a plain-language description of the task.

They use “meta-prompts” and “meta-schemas” to do this.

- Prompts: We use meta-prompts that incorporate best practices to generate or improve prompts.

- Schemas: We use meta-schemas that produce valid JSON and function syntax.
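A meta-schema is a schema whose instances are themselves schemas. The function below is a minimal pure-Python sketch of that idea. It is a hypothetical helper, not OpenAI's code, and it hand-codes the rules that the `schema_definition` entry of the structured-output meta-schema expresses in JSON Schema:

- `type` is required and limited to six values.
- Objects must define `properties`.
- Children under `properties` and `items` are checked recursively.
- No other keys are allowed.

Because of that last rule, it rejects keys such as `description`, just as `schema_definition` does.

```python
from typing import Any

# Mirrors the "schema_definition" entry of the structured-output META_SCHEMA.
ALLOWED_TYPES = {"object", "array", "string", "number", "boolean", "null"}
ALLOWED_KEYS = {"type", "properties", "items", "required", "additionalProperties"}


def schema_errors(node: Any, path: str = "$") -> list[str]:
    """Return every way `node` breaks the schema_definition rules (empty list = valid)."""
    if not isinstance(node, dict):
        return [f"{path}: expected a JSON object"]
    errors: list[str] = []
    extra = set(node) - ALLOWED_KEYS
    if extra:  # schema_definition sets additionalProperties to False
        errors.append(f"{path}: unexpected keys {sorted(extra)}")
    node_type = node.get("type")
    if node_type not in ALLOWED_TYPES:  # "type" is required and limited to the enum
        errors.append(f"{path}: invalid or missing type {node_type!r}")
    if node_type == "object" and "properties" not in node:  # the if/then rule
        errors.append(f"{path}: objects must define 'properties'")
    props = node.get("properties", {})
    if not isinstance(props, dict):
        errors.append(f"{path}: 'properties' must be an object")
    else:
        for name, child in props.items():
            errors.extend(schema_errors(child, f"{path}.properties.{name}"))
    # "items" is either one schema or a list of schemas (the two anyOf branches).
    items = node.get("items")
    if isinstance(items, list):
        for i, child in enumerate(items):
            errors.extend(schema_errors(child, f"{path}.items[{i}]"))
    elif items is not None:
        errors.extend(schema_errors(items, f"{path}.items"))
    required = node.get("required", [])
    if not isinstance(required, list) or not all(isinstance(r, str) for r in required):
        errors.append(f"{path}: 'required' must be a list of strings")
    if not isinstance(node.get("additionalProperties", False), bool):
        errors.append(f"{path}: 'additionalProperties' must be a boolean")
    return errors
```

The function collects every problem instead of stopping at the first one. That makes it easy to report all issues at once, for example when asking the model to try again.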

OpenAI also says it may later integrate more advanced techniques such as DSPy and "Gradient Descent".

The meta-prompt is quite instructive, and it's great that OpenAI has open-sourced it. I've started using LLMs to generate prompts too. I then modify what the LLM generates for my specific use case and run some evaluations.

On the first pass at a use case, language models are generally more comprehensive than I am. Humans, me included, are forgetful and less thorough than a well-prompted language model. This reminds me of a study in which evaluators preferred ChatGPT's answers to physicians' answers roughly 80% of the time.

That's not to say humans can't write better prompts than a language model. But once you've done a task many times, you may become less thorough, rush through it, or lack the time for robust prompt engineering.
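The generate, edit, then evaluate loop described above can be sketched as a small harness. This is a hypothetical sketch, not part of OpenAI's code:

- `run_model` stands in for whatever function sends a system prompt and a user input to your model, for example a wrapper around `client.chat.completions.create`.
- `Case`, `score_prompt`, and `pick_best` are illustrative names.
- Exact-match scoring is an assumption that only suits tasks with short, well-defined answers.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical signature: (system_prompt, user_input) -> model output text.
ModelFn = Callable[[str, str], str]


@dataclass
class Case:
    user_input: str
    expected: str


def score_prompt(system_prompt: str, cases: list[Case], run_model: ModelFn) -> float:
    """Fraction of cases whose output matches `expected` (case- and whitespace-insensitive)."""
    if not cases:
        raise ValueError("need at least one test case")
    hits = sum(
        run_model(system_prompt, c.user_input).strip().lower() == c.expected.strip().lower()
        for c in cases
    )
    return hits / len(cases)


def pick_best(prompts: list[str], cases: list[Case], run_model: ModelFn) -> tuple[str, dict[str, float]]:
    """Score each candidate prompt (e.g. the generated one and your edited one) and return the best."""
    scores = {p: score_prompt(p, cases, run_model) for p in prompts}
    best = max(scores, key=scores.__getitem__)  # ties go to the earliest prompt
    return best, scores
```

To compare drafts, pass both the raw `generate_prompt` output and your hand-edited version to `pick_best`. For open-ended outputs, exact match is too crude, and you would need a rubric or a model-graded check, which this sketch does not cover.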

Below are two meta-prompt examples and two meta-schema examples.

Meta-Prompt: Text-Out

```python
from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

META_PROMPT = """
Given a task description or existing prompt, produce a detailed system prompt to guide a language model in completing the task effectively.

# Guidelines

- Understand the Task: Grasp the main objective, goals, requirements, constraints, and expected output.
- Minimal Changes: If an existing prompt is provided, improve it only if it's simple. For complex prompts, enhance clarity and add missing elements without altering the original structure.
- Reasoning Before Conclusions: Encourage reasoning steps before any conclusions are reached. ATTENTION! If the user provides examples where the reasoning happens afterward, REVERSE the order! NEVER START EXAMPLES WITH CONCLUSIONS!
    - Reasoning Order: Call out reasoning portions of the prompt and conclusion parts (specific fields by name). For each, determine the ORDER in which this is done, and whether it needs to be reversed.
    - Conclusion, classifications, or results should ALWAYS appear last.
- Examples: Include high-quality examples if helpful, using placeholders [in brackets] for complex elements.
    - What kinds of examples may need to be included, how many, and whether they are complex enough to benefit from placeholders.
- Clarity and Conciseness: Use clear, specific language. Avoid unnecessary instructions or bland statements.
- Formatting: Use markdown features for readability. DO NOT USE ``` CODE BLOCKS UNLESS SPECIFICALLY REQUESTED.
- Preserve User Content: If the input task or prompt includes extensive guidelines or examples, preserve them entirely, or as closely as possible. If they are vague, consider breaking down into sub-steps. Keep any details, guidelines, examples, variables, or placeholders provided by the user.
- Constants: DO include constants in the prompt, as they are not susceptible to prompt injection. Such as guides, rubrics, and examples.
- Output Format: Explicitly state the most appropriate output format, in detail. This should include length and syntax (e.g. short sentence, paragraph, JSON, etc.)
    - For tasks outputting well-defined or structured data (classification, JSON, etc.) bias toward outputting a JSON.
    - JSON should never be wrapped in code blocks (```) unless explicitly requested.

The final prompt you output should adhere to the following structure below. Do not include any additional commentary, only output the completed system prompt. SPECIFICALLY, do not include any additional messages at the start or end of the prompt. (e.g. no "---")

[Concise instruction describing the task - this should be the first line in the prompt, no section header]

[Additional details as needed.]

[Optional sections with headings or bullet points for detailed steps.]

# Steps [optional]

[optional: a detailed breakdown of the steps necessary to accomplish the task]

# Output Format

[Specifically call out how the output should be formatted, be it response length, structure e.g. JSON, markdown, etc]

# Examples [optional]

[Optional: 1-3 well-defined examples with placeholders if necessary. Clearly mark where examples start and end, and what the input and output are. Use placeholders as necessary.]
[If the examples are shorter than what a realistic example is expected to be, make a reference with () explaining how real examples should be longer / shorter / different. AND USE PLACEHOLDERS! ]

# Notes [optional]

[optional: edge cases, details, and an area to call or repeat out specific important considerations]
""".strip()


def generate_prompt(task_or_prompt: str) -> str:
    """Turn a task description (or an existing prompt) into a detailed system prompt."""
    completion = client.chat.completions.create(
        model="gpt-4o",
        messages=[
            {"role": "system", "content": META_PROMPT},
            {"role": "user", "content": "Task, Goal, or Current Prompt:\n" + task_or_prompt},
        ],
    )
    return completion.choices[0].message.content
```

Meta-Prompt: Audio-Out

```python
from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

META_PROMPT = """
Given a task description or existing prompt, produce a detailed system prompt to guide a realtime audio output language model in completing the task effectively.

# Guidelines

- Understand the Task: Grasp the main objective, goals, requirements, constraints, and expected output.
- Tone: Make sure to specifically call out the tone. By default it should be emotive and friendly, and speak quickly to avoid keeping the user just waiting.
- Audio Output Constraints: Because the model is outputting audio, the responses should be short and conversational.
- Minimal Changes: If an existing prompt is provided, improve it only if it's simple. For complex prompts, enhance clarity and add missing elements without altering the original structure.
- Examples: Include high-quality examples if helpful, using placeholders [in brackets] for complex elements.
    - What kinds of examples may need to be included, how many, and whether they are complex enough to benefit from placeholders.
    - It is very important that any examples included reflect the short, conversational output responses of the model.
      Keep the sentences very short by default. Instead of 3 sentences in a row by the assistant, it should be split up with a back and forth with the user instead.
    - By default each sentence should be a few words only (5-20ish words). However, if the user specifically asks for "short" responses, then the examples should truly have 1-10 word responses max.
    - Make sure the examples are multi-turn (at least 4 back-forth-back-forth per example), not just one question and response. They should reflect an organic conversation.
- Clarity and Conciseness: Use clear, specific language. Avoid unnecessary instructions or bland statements.
- Preserve User Content: If the input task or prompt includes extensive guidelines or examples, preserve them entirely, or as closely as possible. If they are vague, consider breaking down into sub-steps. Keep any details, guidelines, examples, variables, or placeholders provided by the user.
- Constants: DO include constants in the prompt, as they are not susceptible to prompt injection. Such as guides, rubrics, and examples.

The final prompt you output should adhere to the following structure below. Do not include any additional commentary, only output the completed system prompt. SPECIFICALLY, do not include any additional messages at the start or end of the prompt. (e.g. no "---")

[Concise instruction describing the task - this should be the first line in the prompt, no section header]

[Additional details as needed.]

[Optional sections with headings or bullet points for detailed steps.]

# Examples [optional]

[Optional: 1-3 well-defined examples with placeholders if necessary. Clearly mark where examples start and end, and what the input and output are. Use placeholders as necessary.]
[If the examples are shorter than what a realistic example is expected to be, make a reference with () explaining how real examples should be longer / shorter / different. AND USE PLACEHOLDERS! ]

# Notes [optional]

[optional: edge cases, details, and an area to call or repeat out specific important considerations]
""".strip()


def generate_prompt(task_or_prompt: str) -> str:
    """Turn a task description (or an existing prompt) into a system prompt for a realtime audio model."""
    completion = client.chat.completions.create(
        model="gpt-4o",
        messages=[
            {"role": "system", "content": META_PROMPT},
            {"role": "user", "content": "Task, Goal, or Current Prompt:\n" + task_or_prompt},
        ],
    )
    return completion.choices[0].message.content
```

Meta-Schema: Structured Output

```python
from openai import OpenAI
import json

client = OpenAI()

META_SCHEMA = {
    "name": "metaschema",
    "schema": {
        "type": "object",
        "properties": {
            "name": {
                "type": "string",
                "description": "The name of the schema"
            },
            "type": {
                "type": "string",
                "enum": ["object", "array", "string", "number", "boolean", "null"]
            },
            "properties": {
                "type": "object",
                "additionalProperties": {"$ref": "#/$defs/schema_definition"}
            },
            "items": {
                "anyOf": [
                    {"$ref": "#/$defs/schema_definition"},
                    {"type": "array", "items": {"$ref": "#/$defs/schema_definition"}}
                ]
            },
            "required": {"type": "array", "items": {"type": "string"}},
            "additionalProperties": {"type": "boolean"}
        },
        "required": ["type"],
        "additionalProperties": False,
        "if": {"properties": {"type": {"const": "object"}}},
        "then": {"required": ["properties"]},
        "$defs": {
            "schema_definition": {
                "type": "object",
                "properties": {
                    "type": {
                        "type": "string",
                        "enum": ["object", "array", "string", "number", "boolean", "null"]
                    },
                    "properties": {
                        "type": "object",
                        "additionalProperties": {"$ref": "#/$defs/schema_definition"}
                    },
                    "items": {
                        "anyOf": [
                            {"$ref": "#/$defs/schema_definition"},
                            {"type": "array", "items": {"$ref": "#/$defs/schema_definition"}}
                        ]
                    },
                    "required": {"type": "array", "items": {"type": "string"}},
                    "additionalProperties": {"type": "boolean"}
                },
                "required": ["type"],
                "additionalProperties": False,
                "if": {"properties": {"type": {"const": "object"}}},
                "then": {"required": ["properties"]}
            }
        }
    }
}

META_PROMPT = """
# Instructions
Return a valid schema for the described JSON.

You must also make sure:
- all fields in an object are set as required
- I REPEAT, ALL FIELDS MUST BE MARKED AS REQUIRED
- all objects must have additionalProperties set to false
    - because of this, some cases like "attributes" or "metadata" properties that would normally allow additional properties should instead have a fixed set of properties
- all objects must have properties defined
- field order matters. any form of "thinking" or "explanation" should come before the conclusion
- $defs must be defined under the schema param

Notable keywords NOT supported include:
- For strings: minLength, maxLength, pattern, format
- For numbers: minimum, maximum, multipleOf
- For objects: patternProperties, unevaluatedProperties, propertyNames, minProperties, maxProperties
- For arrays: unevaluatedItems, contains, minContains, maxContains, minItems, maxItems, uniqueItems

Other notes:
- definitions and recursion are supported
- only if necessary to include references e.g. "$defs", it must be inside the "schema" object

# Examples
Input: Generate a math reasoning schema with steps and a final answer.
Output: {
  "name": "math_reasoning",
  "type": "object",
  "properties": {
    "steps": {
      "type": "array",
      "description": "A sequence of steps involved in solving the math problem.",
      "items": {
        "type": "object",
        "properties": {
          "explanation": {
            "type": "string",
            "description": "Description of the reasoning or method used in this step."
          },
          "output": {
            "type": "string",
            "description": "Result or outcome of this specific step."
          }
        },
        "required": ["explanation", "output"],
        "additionalProperties": false
      }
    },
    "final_answer": {
      "type": "string",
      "description": "The final solution or answer to the math problem."
    }
  },
  "required": ["steps", "final_answer"],
  "additionalProperties": false
}

Input: Give me a linked list
Output: {
  "name": "linked_list",
  "type": "object",
  "properties": {
    "linked_list": {
      "$ref": "#/$defs/linked_list_node",
      "description": "The head node of the linked list."
    }
  },
  "$defs": {
    "linked_list_node": {
      "type": "object",
      "description": "Defines a node in a singly linked list.",
      "properties": {
        "value": {
          "type": "number",
          "description": "The value stored in this node."
        },
        "next": {
          "anyOf": [
            {"$ref": "#/$defs/linked_list_node"},
            {"type": "null"}
          ],
          "description": "Reference to the next node; null if it is the last node."
        }
      },
      "required": ["value", "next"],
      "additionalProperties": false
    }
  },
  "required": ["linked_list"],
  "additionalProperties": false
}

Input: Dynamically generated UI
Output: {
  "name": "ui",
  "type": "object",
  "properties": {
    "type": {
      "type": "string",
      "description": "The type of the UI component",
      "enum": ["div", "button", "header", "section", "field", "form"]
    },
    "label": {
      "type": "string",
      "description": "The label of the UI component, used for buttons or form fields"
    },
    "children": {
      "type": "array",
      "description": "Nested UI components",
      "items": {"$ref": "#"}
    },
    "attributes": {
      "type": "array",
      "description": "Arbitrary attributes for the UI component, suitable for any element",
      "items": {
        "type": "object",
        "properties": {
          "name": {
            "type": "string",
            "description": "The name of the attribute, for example onClick or className"
          },
          "value": {
            "type": "string",
            "description": "The value of the attribute"
          }
        },
        "required": ["name", "value"],
        "additionalProperties": false
      }
    }
  },
  "required": ["type", "label", "children", "attributes"],
  "additionalProperties": false
}
""".strip()


def generate_schema(description: str):
    completion = client.chat.completions.create(
        model="gpt-4o-mini",
        response_format={"type": "json_schema", "json_schema": META_SCHEMA},
        messages=[
            {"role": "system", "content": META_PROMPT},
            {"role": "user", "content": "Description:\n" + description},
        ],
    )
    return json.loads(completion.choices[0].message.content)
```

Meta-Schema: Function Schema

```python
from openai import OpenAI
import json

client = OpenAI()

META_SCHEMA = {
    "name": "function-metaschema",
    "schema": {
        "type": "object",
        "properties": {
            "name": {
                "type": "string",
                "description": "The name of the function"
            },
            "description": {
                "type": "string",
                "description": "A description of what the function does"
            },
            "parameters": {
                "$ref": "#/$defs/schema_definition",
                "description": "A JSON schema that defines the function's parameters"
            }
        },
        "required": ["name", "description", "parameters"],
        "additionalProperties": False,
        "$defs": {
            "schema_definition": {
                "type": "object",
                "properties": {
                    "type": {
                        "type": "string",
                        "enum": ["object", "array", "string", "number", "boolean", "null"]
                    },
                    "properties": {
                        "type": "object",
                        "additionalProperties": {"$ref": "#/$defs/schema_definition"}
                    },
                    "items": {
                        "anyOf": [
                            {"$ref": "#/$defs/schema_definition"},
                            {"type": "array", "items": {"$ref": "#/$defs/schema_definition"}}
                        ]
                    },
                    "required": {"type": "array", "items": {"type": "string"}},
                    "additionalProperties": {"type": "boolean"}
                },
                "required": ["type"],
                "additionalProperties": False,
                "if": {"properties": {"type": {"const": "object"}}},
                "then": {"required": ["properties"]}
            }
        }
    }
}

META_PROMPT = """
# Instructions
Return a valid schema for the described function.

Pay special attention to making sure that "required" and "type" are always at the correct level of nesting. For example, "required" should be at the same level as "properties", not inside it.
Make sure that every property, no matter how short, has a type and description correctly nested inside it.

# Examples
Input: Assign values to NN hyperparameters
Output: {
  "name": "set_hyperparameters",
  "description": "Assign values to NN hyperparameters",
  "parameters": {
    "type": "object",
    "required": ["learning_rate", "epochs"],
    "properties": {
      "epochs": {
        "type": "number",
        "description": "Number of complete passes through dataset"
      },
      "learning_rate": {
        "type": "number",
        "description": "Speed of model learning"
      }
    }
  }
}

Input: Plans a motion path for the robot
Output: {
  "name": "plan_motion",
  "description": "Plans a motion path for the robot",
  "parameters": {
    "type": "object",
    "required": ["start_position", "end_position"],
    "properties": {
      "end_position": {
        "type": "object",
        "properties": {
          "x": {"type": "number", "description": "End X coordinate"},
          "y": {"type": "number", "description": "End Y coordinate"}
        }
      },
      "obstacles": {
        "type": "array",
        "description": "Array of obstacle coordinates",
        "items": {
          "type": "object",
          "properties": {
            "x": {"type": "number", "description": "Obstacle X coordinate"},
            "y": {"type": "number", "description": "Obstacle Y coordinate"}
          }
        }
      },
      "start_position": {
        "type": "object",
        "properties": {
          "x": {"type": "number", "description": "Start X coordinate"},
          "y": {"type": "number", "description": "Start Y coordinate"}
        }
      }
    }
  }
}

Input: Calculates various technical indicators
Output: {
  "name": "technical_indicator",
  "description": "Calculates various technical indicators",
  "parameters": {
    "type": "object",
    "required": ["ticker", "indicators"],
    "properties": {
      "indicators": {
        "type": "array",
        "description": "List of technical indicators to calculate",
        "items": {
          "type": "string",
          "description": "Technical indicator",
          "enum": ["RSI", "MACD", "Bollinger_Bands", "Stochastic_Oscillator"]
        }
      },
      "period": {
        "type": "number",
        "description": "Time period for the analysis"
      },
      "ticker": {
        "type": "string",
        "description": "Stock ticker symbol"
      }
    }
  }
}
""".strip()


def generate_function_schema(description: str):
    completion = client.chat.completions.create(
        model="gpt-4o-mini",
        response_format={"type": "json_schema", "json_schema": META_SCHEMA},
        messages=[
            {"role": "system", "content": META_PROMPT},
            {"role": "user", "content": "Description:\n" + description},
        ],
    )
    return json.loads(completion.choices[0].message.content)
```
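The function meta-prompt asks the model to keep "required" at the same level as "properties" and to give every property a type and a description. Since these are instructions rather than guarantees, a post-generation check can catch slips. The code below is a minimal sketch of such a check:

- `function_schema_problems` is a hypothetical helper, not part of the OpenAI SDK.
- It treats a list-valued entry named "required" inside "properties" as a misplaced `required`. That is a heuristic.

```python
from typing import Any


def function_schema_problems(fn: dict[str, Any]) -> list[str]:
    """List the ways a generated function schema breaks the meta-prompt's rules."""
    problems: list[str] = []
    for key in ("name", "description", "parameters"):
        if key not in fn:
            problems.append(f"missing top-level {key!r}")
    _check_node(fn.get("parameters", {}), "parameters", problems)
    return problems


def _check_node(node: Any, path: str, problems: list[str]) -> None:
    """Recursively check one schema node, appending problems in place."""
    if not isinstance(node, dict):
        problems.append(f"{path}: expected a JSON object")
        return
    props = node.get("properties", {})
    if not isinstance(props, dict):
        problems.append(f"{path}: 'properties' must be an object")
        props = {}
    for name, child in props.items():
        child_path = f"{path}.{name}"
        if name == "required" and isinstance(child, list):
            # Heuristic: a list here usually means "required" was nested one level too deep.
            problems.append(f"{path}: 'required' is nested inside 'properties'")
            continue
        if not isinstance(child, dict):
            problems.append(f"{child_path}: expected a JSON object")
            continue
        for key in ("type", "description"):  # every property needs both
            if key not in child:
                problems.append(f"{child_path}: missing {key!r}")
        _check_node(child, child_path, problems)
    required = node.get("required", [])
    if not isinstance(required, list):
        problems.append(f"{path}: 'required' must be a list")
        required = []
    for name in required:
        if not isinstance(name, str) or name not in props:
            problems.append(f"{path}: required field {name!r} is not a property")
    # Array item schemas are not properties, so they are not required to carry a description.
    if isinstance(node.get("items"), dict):
        _check_node(node["items"], f"{path}[]", problems)
```

Run against the plan_motion example above, this check reports that `start_position` and `end_position` have no description. Even hand-picked examples can drift from the stated rules, which is a good reason to validate generated schemas before using them.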